How to Architect a TinyML Application with an RTOS

A question I’ve been asked on several occasions is, “How do I use machine learning with an RTOS?” As machine learning finds its way into more products, a growing number of those applications will target low-power, clock-limited edge devices built around a microcontroller. More than half of microcontroller-based real-time systems use a real-time operating system (RTOS). This post explores how to architect RTOS-based embedded software that includes machine learning algorithms.

High-Level Data Flow Diagram for a TinyML Application

When developing any embedded software architecture, the best place to start is to separate the software into hardware-dependent and hardware-independent architectures and to identify the data assets in the software. How data flows through the application dictates how we break the system into high-level tasks. I wrote about this extensively in my series entitled “5 Steps to Designing an Embedded Software Architecture”. Designing a machine learning application using TinyML and an RTOS is no different.

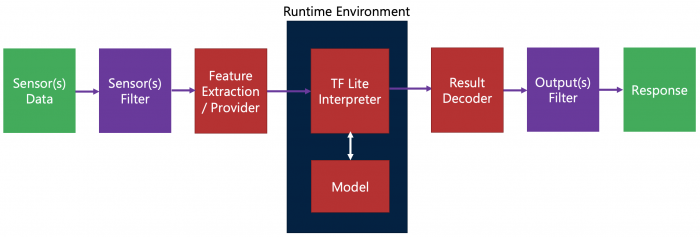

In general, if I were to put together a data flow diagram for a TinyML application, the data flow would look something like Figure 1.

Figure 1 – A typical data flow diagram for a TinyML application. (Source: Beningo Embedded Group)

As you can see in the diagram, at the center is the run-time environment, which feeds data into the machine learning model and generates a result from it. On the input side, we receive sensor data, filter it, and extract from the filtered data the features that our machine learning model requires. Next, the model runs and produces a result, which is decoded and filtered before the system generates an output response.
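One way to make the data flow in Figure 1 concrete is to give each arrow its own data type and each bubble its own function. The C sketch below does that for the path from a window of sensor samples to a decoded class. Every name in it (`raw_window_t`, `extract_features()`, `run_model()`, and so on) is hypothetical, filtering and the output response are omitted for brevity, and `run_model()` is a fixed linear score standing in for a real ML run-time, not a trained model.

```c
#include <stddef.h>
#include <stdint.h>

#define WINDOW_SAMPLES 8u

/* Hypothetical data assets: one type per arrow in the data flow diagram. */
typedef struct { int16_t samples[WINDOW_SAMPLES]; } raw_window_t; /* sensor data        */
typedef struct { float mean; float peak; } features_t;             /* extracted features */
typedef struct { float score; } model_result_t;                    /* raw model output   */
typedef enum { CLASS_IDLE = 0, CLASS_ACTIVE = 1 } class_t;         /* decoded result     */

/* Feature extraction (filtering omitted): mean and peak absolute value. */
static features_t extract_features(const raw_window_t *w)
{
    features_t f = { 0.0f, 0.0f };
    for (size_t i = 0; i < WINDOW_SAMPLES; i++) {
        float x = (float)w->samples[i];
        float magnitude = (x < 0.0f) ? -x : x;
        f.mean += x;
        if (magnitude > f.peak) {
            f.peak = magnitude;
        }
    }
    f.mean /= (float)WINDOW_SAMPLES;
    return f;
}

/* Placeholder for the ML run-time: a fixed linear score, NOT a trained model. */
static model_result_t run_model(const features_t *f)
{
    model_result_t r = { 0.01f * f->peak + 0.005f * f->mean };
    return r;
}

/* Decode the raw score into a class label using a fixed threshold. */
static class_t decode_result(const model_result_t *r)
{
    return (r->score > 0.5f) ? CLASS_ACTIVE : CLASS_IDLE;
}

/* One pass from raw sensor window to decoded class. */
class_t pipeline_step(const raw_window_t *w)
{
    features_t f = extract_features(w);
    model_result_t r = run_model(&f);
    return decode_result(&r);
}
```

Because each stage consumes only the previous stage’s type, the stages can later be split across tasks without changing their signatures.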

Identifying RTOS tasks in the TinyML dataflow

The data flow diagram in Figure 1 screams at a software architect how the system should be broken up into tasks. When developing a software architecture, we often identify tasks based on the data domain. For example, data that belongs together, along with the processes that operate on it, may be collected into a single task. However, the end application and its timing needs will dictate the final task breakdown, along with each task’s period and priority.

In the general TinyML data flow diagram, I can see several ways the system could be architected. First, no matter how I break up the tasks, the run-time environment will be put in its own task. The run-time contains the model (the neural network our system will run) and the code that supports running it. For example, TensorFlow Lite for Microcontrollers is a common run-time environment developers might use. We can view the run-time environment as its own semi-independent application: it is fed data, processes it, and produces an output. That leaves us to deal with the inputs and the outputs.
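Treating the run-time as a semi-independent application suggests hiding it behind a small interface so that the rest of the system never calls the ML framework directly. The sketch below assumes a hypothetical `ml_runtime_t` table of function pointers. A real system might implement it as a thin wrapper around TensorFlow Lite for Microcontrollers, but the toy run-time shown here just sums its inputs and exists only for illustration.

```c
#include <stddef.h>

/* Hypothetical interface hiding the ML run-time behind two functions.
 * Other tasks depend only on this struct, so swapping the model or the
 * run-time means providing a different instance, not rewriting those tasks. */
typedef struct {
    int (*init)(void);
    int (*invoke)(const float *features, size_t n_features,
                  float *scores, size_t n_scores);
} ml_runtime_t;

/* Toy run-time for illustration: scores[k] = (sum of features) * (k + 1). */
static int toy_init(void)
{
    return 0; /* nothing to set up */
}

static int toy_invoke(const float *features, size_t n_features,
                      float *scores, size_t n_scores)
{
    float sum = 0.0f;
    for (size_t i = 0; i < n_features; i++) {
        sum += features[i];
    }
    for (size_t k = 0; k < n_scores; k++) {
        scores[k] = sum * (float)(k + 1);
    }
    return 0;
}

const ml_runtime_t toy_runtime = { toy_init, toy_invoke };

/* Body of one inference-task cycle. It only knows about ml_runtime_t.
 * In an RTOS, features would arrive from another task (e.g. via a queue)
 * and scores would be sent onward; here both are plain arguments. */
int inference_task_cycle(const ml_runtime_t *rt,
                         const float *features, size_t n_features,
                         float *scores, size_t n_scores)
{
    return rt->invoke(features, n_features, scores, n_scores);
}
```

If the model later changes, only the `ml_runtime_t` instance passed to the inference task needs to change.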

In general, my personal preference is to separate sensor data acquisition from sensor processing. High-frequency acquisition needs may even mean that the raw sensor conversions don’t happen in a task at all, but in an interrupt handler or via DMA instead. Of course, this is entirely application dependent. If we look closely, filtering sensor data and extracting features from it are closely related, so I would group sensor filtering and feature extraction into a single task. Is that the correct answer? Yes and no. It depends on the application.
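A minimal sketch of that combined filter and feature-extraction task body follows. It assumes integer sensor samples, a hypothetical four-sample moving-average filter, and min/max/mean as stand-in features. A real model defines its own features, so treat `extract_features()` as a placeholder.

```c
#include <stddef.h>
#include <stdint.h>

#define WINDOW_LEN 4u /* hypothetical moving-average length */

/* Moving-average (boxcar) filter state, owned by the processing task. */
typedef struct {
    int32_t history[WINDOW_LEN];
    size_t  index; /* next slot to overwrite */
    int32_t sum;   /* running sum of the stored samples */
    size_t  count; /* samples seen so far, saturates at WINDOW_LEN */
} moving_avg_t;

/* Feed one raw sample and return the average of the last (up to) N samples. */
int32_t moving_avg_update(moving_avg_t *f, int32_t sample)
{
    f->sum -= f->history[f->index]; /* drop the oldest (0 until the buffer fills) */
    f->history[f->index] = sample;
    f->sum += sample;
    f->index = (f->index + 1u) % WINDOW_LEN;
    if (f->count < WINDOW_LEN) {
        f->count++;
    }
    return f->sum / (int32_t)f->count;
}

typedef struct {
    int32_t min;
    int32_t max;
    int32_t mean;
} features_t;

/* Extract simple features from a block of filtered samples (n must be > 0). */
features_t extract_features(const int32_t *x, size_t n)
{
    features_t ft = { x[0], x[0], 0 };
    int64_t acc = 0;
    for (size_t i = 0; i < n; i++) {
        if (x[i] < ft.min) ft.min = x[i];
        if (x[i] > ft.max) ft.max = x[i];
        acc += x[i];
    }
    ft.mean = (int32_t)(acc / (int64_t)n);
    return ft;
}

/* One cycle of the combined task: filter a block of raw samples in place,
 * then compute the features of the filtered block. */
features_t sensor_processing_cycle(moving_avg_t *f, int32_t *block, size_t n)
{
    for (size_t i = 0; i < n; i++) {
        block[i] = moving_avg_update(f, block[i]);
    }
    return extract_features(block, n);
}
```

Because the filter state lives in a `moving_avg_t` that only this task touches, it needs no locking.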

On the output side, I would take a similar approach to the one on the input side. Decoding the result and filtering the output are related, so I would group these into a single task. The output response could be folded into that same task, leaving a single output task. Alternatively, I could create a separate task that manages all the outputs. The best answer depends on the application and the real-time needs of the system.
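The decode and output-filter steps might look like the sketch below. It assumes the model produces one score per class, decodes the result with an argmax, and uses a hypothetical debounce filter (`DEBOUNCE_COUNT` consecutive agreeing results) as the output filter. Other filters, such as a majority vote over a window, are equally valid.

```c
#include <stddef.h>

#define DEBOUNCE_COUNT 3u /* hypothetical: consecutive agreeing results required */

/* Decode: index of the highest score (the predicted class). n must be > 0. */
size_t decode_argmax(const float *scores, size_t n)
{
    size_t best = 0;
    for (size_t i = 1; i < n; i++) {
        if (scores[i] > scores[best]) {
            best = i;
        }
    }
    return best;
}

/* Output filter: change the reported class only after the model has agreed
 * on a new class DEBOUNCE_COUNT times in a row, suppressing one-off flickers. */
typedef struct {
    size_t   reported;  /* class currently driving the output */
    size_t   candidate; /* class trying to replace it */
    unsigned streak;    /* consecutive results equal to candidate */
} debounce_t;

size_t debounce_update(debounce_t *d, size_t cls)
{
    if (cls == d->reported) {
        d->streak = 0;            /* no change requested */
    } else if (cls == d->candidate) {
        if (++d->streak >= DEBOUNCE_COUNT) {
            d->reported = cls;    /* new class confirmed */
            d->streak = 0;
        }
    } else {
        d->candidate = cls;       /* start tracking a different class */
        d->streak = 1;
    }
    return d->reported;
}
```

A debounce like this trades a few inference periods of latency for a stable output, so `DEBOUNCE_COUNT` is a tuning knob tied to the output task’s period.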

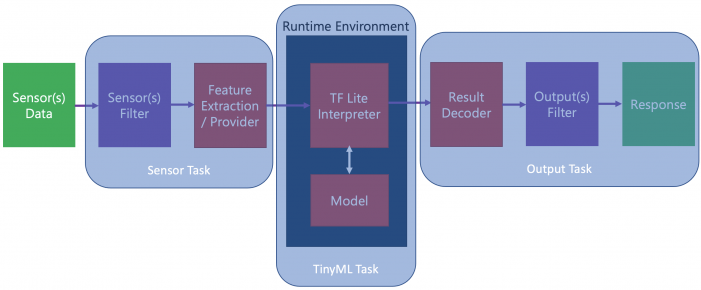

The result will look something like Figure 2.

Figure 2 – An example task partition for a TinyML application using an RTOS (Source: Beningo Embedded Group)

Feeding the machine learning run-time

As you look at the high-level RTOS task decomposition for the TinyML application, you’ll notice dataflow lines that cross from one task to another. Each crossing marks data moving across a task boundary, and it tells us that we need to put a mechanism in place to share that data between the tasks.

With an RTOS, there are several ways to share data between tasks. First, we could set up a shared memory location and use a mutex to protect the data against race conditions. Second, we could use a queue to transfer the data between the tasks. The exact mechanism we use is, of course, application-dependent! So I can’t tell you which one fits your needs, but we at least have a few mechanisms to choose from.
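To illustrate the queue option, here is a portable sketch of the copy-by-value semantics an RTOS message queue provides. It is a single-producer/single-consumer ring buffer with hypothetical names and no locking, so it is not safe to share between tasks as written. In a real system you would use the kernel’s queue instead (for example, `xQueueSend()` and `xQueueReceive()` in FreeRTOS).

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

#define QUEUE_DEPTH 4u /* hypothetical queue depth */

/* Hypothetical payload: one feature vector for the inference task. */
typedef struct {
    float features[3];
} feature_msg_t;

/* Ring buffer that copies messages by value.
 * NOTE: not thread-safe as written; it models queue semantics only. */
typedef struct {
    feature_msg_t items[QUEUE_DEPTH];
    size_t head;  /* next slot to read */
    size_t tail;  /* next slot to write */
    size_t count; /* messages currently stored */
} msg_queue_t;

/* Copy *msg into the queue; returns false if the queue is full. */
bool queue_send(msg_queue_t *q, const feature_msg_t *msg)
{
    if (q->count == QUEUE_DEPTH) {
        return false; /* full: the caller decides whether to drop or retry */
    }
    memcpy(&q->items[q->tail], msg, sizeof *msg);
    q->tail = (q->tail + 1u) % QUEUE_DEPTH;
    q->count++;
    return true;
}

/* Copy the oldest message into *out; returns false if the queue is empty. */
bool queue_receive(msg_queue_t *q, feature_msg_t *out)
{
    if (q->count == 0u) {
        return false;
    }
    memcpy(out, &q->items[q->head], sizeof *out);
    q->head = (q->head + 1u) % QUEUE_DEPTH;
    q->count--;
    return true;
}
```

Because each feature vector is copied into the queue, the producer can reuse its buffer immediately. That is the main practical difference from the shared-memory-plus-mutex approach, where both tasks touch the same data.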

TinyML RTOS Task Priorities and Timing

A key benefit of using an RTOS with TinyML applications is that we gain a level of flexibility and scalability that we would not have with a bare-metal implementation. Each RTOS task can have its own priority and periodicity tuned, and that ability to adjust the tasks helps us meet the system’s real-time requirements.
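One common starting point for choosing priorities is rate-monotonic assignment: the shorter a task’s period, the higher its priority. The sketch below applies that rule to hypothetical task descriptors and adds a rough utilization check. In a real system the periods and worst-case execution times would come from measurement, and the numeric priority convention varies between RTOSes (in FreeRTOS a higher number means a higher priority; some other kernels use the opposite).

```c
#include <stddef.h>

/* Hypothetical task descriptors for a partition like the one in Figure 2. */
typedef struct {
    const char *name;
    unsigned period_ms; /* how often the task runs */
    unsigned wcet_ms;   /* estimated worst-case execution time */
    unsigned priority;  /* assigned below; higher number = higher priority */
} task_cfg_t;

/* Rate-monotonic assignment: priority = 1 + number of tasks with a longer
 * period, so the shortest period gets the highest number. Tasks with equal
 * periods receive equal priorities. */
void assign_rate_monotonic(task_cfg_t *tasks, size_t n)
{
    for (size_t i = 0; i < n; i++) {
        unsigned prio = 1u; /* lowest priority used here */
        for (size_t j = 0; j < n; j++) {
            if (tasks[j].period_ms > tasks[i].period_ms) {
                prio++;
            }
        }
        tasks[i].priority = prio;
    }
}

/* Approximate CPU utilization in percent: the sum of wcet/period, truncated
 * per task. A total above 100 cannot be scheduled on one core; a total at or
 * below 100 is necessary but not sufficient for meeting every deadline. */
unsigned utilization_percent(const task_cfg_t *tasks, size_t n)
{
    unsigned total = 0u;
    for (size_t i = 0; i < n; i++) {
        total += (100u * tasks[i].wcet_ms) / tasks[i].period_ms;
    }
    return total;
}
```

For example, an acquisition task at 5 ms, a processing task at 20 ms, an output task at 50 ms, and an inference task at 200 ms would receive priorities 4, 3, 2, and 1 respectively. Tuning then means adjusting those periods, not rewriting the tasks.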

An interesting point to note is that, to make an RTOS-based system run well, we often act on the system’s outputs at the start of a task. The reason is that the time a task spends processing data can vary from cycle to cycle, which introduces jitter into the task timing. If we process data first and then act on the output, the moment the output occurs will vary along with the processing time. If, instead, we act on the output at the start of the task, we minimize that jitter. Minimizing jitter is also why we might want a separate task that only acts on the outputs.
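The act-first pattern can be sketched as a periodic task body that applies the command computed in the previous cycle before doing any processing. The names below are hypothetical, `apply_output()` only logs the command instead of driving hardware, and the fixed-period wake-up itself (for example, `vTaskDelayUntil()` in FreeRTOS) is left out so the logic can run anywhere.

```c
#include <stddef.h>

#define LOG_LEN 8u

/* Hypothetical output-task state: the command computed last cycle is held
 * here and applied first thing in the next cycle. */
typedef struct {
    int    pending_cmd;      /* computed in the previous cycle */
    int    applied[LOG_LEN]; /* log of commands driven to the output */
    size_t n_applied;
} output_task_t;

/* Stand-in for driving a GPIO, PWM, etc.: records the command instead. */
static void apply_output(output_task_t *t, int cmd)
{
    if (t->n_applied < LOG_LEN) {
        t->applied[t->n_applied++] = cmd;
    }
}

/* Stand-in for decoding/filtering whose run time may vary between cycles. */
static int compute_next_cmd(int model_result)
{
    return model_result * 2;
}

/* One periodic cycle, run right after the task wakes at its fixed period.
 * Applying the pending command first keeps the output at a fixed offset from
 * the wake-up time, however long compute_next_cmd() takes. */
void output_task_cycle(output_task_t *t, int model_result)
{
    apply_output(t, t->pending_cmd);                 /* 1. act on last result */
    t->pending_cmd = compute_next_cmd(model_result); /* 2. prepare the next   */
}
```

The trade-off is one task period of added latency: the output driven in cycle n is the result computed in cycle n - 1.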

We can also achieve scalability using an RTOS. If our TinyML application evolves to use additional sensor data, we can add a new task to deal with it. If our model evolves, we can update our run-time and potentially leave the other tasks intact. If we want to reuse our model in new applications, we can transport the task and its supporting code modules to the new application with minimal effort.

TinyML RTOS Architecture Conclusions

As we have seen in this post, using an RTOS with a TinyML application isn’t much different from architecting any other embedded system that uses an RTOS. In fact, the only real difference is that we add an extra task to manage the TinyML run-time and perhaps a few additional tasks to support it. Using an RTOS with a TinyML application does, however, give us the flexibility and scalability to tune the application to meet real-time needs. We can adjust task priorities and the periodicity of the TinyML task to achieve our desired objectives.
